Improving study attendance for an early-stage product

A UX research project focused on participant recruitment, usability testing, and research operations that reduced no-shows from 50–60% to 5–10% through improved workflows

Project Overview

Role

UX Researcher

Focus

Participant recruitment, usability testing, research operations

Outcome

Improved attendance workflow and reduced no-shows from 50–60% to 5–10%

The Challenge

The company was developing a product in the virtual reality space and needed participant data to support early product development.

The team relied on recurring user testing sessions, but the process faced operational challenges around participant recruitment, attendance, communication, and live-session reliability.

One of the biggest issues was attendance. Participant no-shows were initially as high as 50–60%, making it harder for the team to run sessions efficiently, collect consistent data, and maintain a dependable research workflow.

My Role

I supported the operational side of user testing from end to end, helping the team recruit participants, manage scheduling, facilitate live sessions, and improve the overall reliability of the testing process.

My responsibilities included:

Setting up and managing study recruitment through User Interviews

Screening and approving participants based on defined criteria

Coordinating participant communication and scheduling

Facilitating live testing sessions and guiding participants through the process

Monitoring data collection quality and troubleshooting issues during sessions

Sharing feedback with engineers and product partners to improve the testing workflow

Research Operations Workflow

Study Setup and Recruitment

I created and managed the participant-facing study setup in User Interviews, including the study description, participation requirements, session expectations, and logistical details. This set clear expectations about what the study involved, so applicants understood the testing process before signing up.

Screening and Scheduling

Once participants applied, I reviewed submissions against study criteria and approved qualified participants for available time slots. Scheduling was managed on a rolling basis, with session availability opened weekly to better align with attendance patterns and team capacity.

Participant Communication

I handled participant communication throughout the process, from approval and scheduling through reminders and pre-session logistics. This communication later became a key area of improvement as I worked to reduce no-shows and make attendance more reliable.

What Wasn’t Working

As I observed the workflow over time, it became clear that the testing process was failing not for lack of participant interest, but because of how the scheduling and communication system was structured.

Participants often signed up too far in advance, received limited reminders, and forgot about their sessions. This created unnecessary drop-off and made it difficult to plan studies confidently or collect data consistently.

My Approach

To make the process more dependable, I redesigned key parts of the participant workflow.

Shorter Scheduling Windows

Instead of approving participants too far in advance, I began approving them in batches and opening time slots only one week at a time. This helped participants book within a more realistic planning window and made them more likely to remember and attend their session.

Redesigning Communication Touchpoints

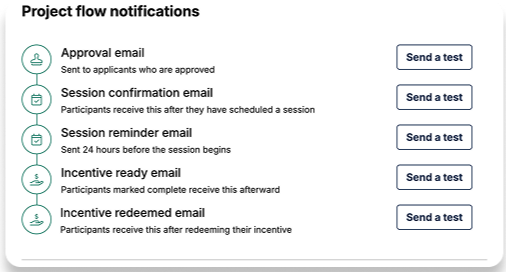

Previously, participants received only a single reminder email the day before their appointment. I helped create a more intentional communication flow by introducing:

a logistics email two days before the session

a manual confirmation email one day before the session

This improved recall without overwhelming participants with too many messages.

Smarter Recruitment Decisions

Where possible, I also factored participant history and patterns from past studies into recruitment decisions. This helped improve the quality and reliability of participant selection over time.

Together, these changes made the attendance workflow more structured, predictable, and effective.

Facilitating Live Testing

In addition to recruitment and scheduling, I supported the live testing experience on-site.

My role included:

welcoming participants and explaining the process

helping them set up testing equipment

guiding them through each stage of the session

monitoring whether the collected data was valid and usable

completing safety checks before light-based facial scanning

The sessions included multiple tasks such as guided facial-expression prompts, conversation-based responses, reading-based tasks, and a 3D facial scan. Throughout the process, I made sure participants felt informed and supported while helping maintain session quality.

Collaboration and Troubleshooting

Because the testing sessions involved both hardware and software, I regularly troubleshot issues in real time to keep sessions running smoothly. This included resolving basic problems related to participant instructions and data-collection tools, and escalating to engineers when needed.

I also shared recurring participant feedback and operational observations with engineers and the product team to help improve the testing workflow over time.

What I Learned

This project showed me that strong UX research depends not only on good questions, but also on strong operations. Recruitment strategy, communication timing, scheduling design, and session reliability all directly affect the quality of research outcomes.

It also reinforced how much value comes from improving the process around research. By making attendance and testing more reliable, I was able to support a smoother experience for participants and better data collection for the team.